Handle duplicate content

Google can filter out or rank down URLs that carry the same content. This happens most often on Ecommerce websites and some travel websites, where manufacturers prefer to keep the same product descriptions on every website that sells their products.

This is one of the biggest challenges that Ecommerce websites face today. Duplicate content covers plagiarism, content scraping, and manipulation, all of which can pull down SEO rankings, web traffic, and, most importantly, the reputation of your website.

Duplicate content may be external or internal. When the content of one Ecommerce website is the same as that of another Ecommerce website, it is termed external duplicate content.

Internal duplicate content, on the other hand, lies within a single Ecommerce website and may arise from technical glitches or editorial causes. Here is how to handle duplicate content and protect your content from being plagiarized and manipulated.

Maintaining Consistency :

If the structure of your website's URLs is inconsistent, there is a high chance of duplicate content being reported. The best way to handle this is to standardize the URL structure and to use canonical tags properly.

Whichever URL version you use, with or without www, over HTTP or HTTPS, it should be consistent. You can set your preferred domain by logging in, opening the site settings at the right-hand corner of the page, and choosing your preferred domain name.

Other inconsistencies may also appear in the URL structure. So, along with keeping the structure of the URL simple, you also need to keep its syntax and parameters consistent.
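As a rough illustration of what "one consistent URL form" can mean in practice, here is a minimal Python sketch. The domain `www.example.com`, the list of tracking parameters, and the conventions chosen (HTTPS, www, no trailing slash, sorted parameters) are all assumptions for the example, not rules taken from Google.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical parameters that do not change what the page shows.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize_url(url, preferred_host="www.example.com"):
    """Return one consistent form of a URL under the assumed conventions."""
    parts = urlsplit(url)

    # Map both the www and the bare host to the preferred host.
    host = parts.netloc.lower()
    bare_host = preferred_host[4:] if preferred_host.startswith("www.") else preferred_host
    if host in (preferred_host, bare_host):
        host = preferred_host

    # Drop tracking parameters and sort the rest, so that parameter
    # order alone never produces a second URL for the same page.
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in TRACKING_PARAMS
    )

    # Remove the trailing slash, but keep "/" for the home page.
    path = parts.path.rstrip("/") or "/"

    # Always use HTTPS and discard the fragment.
    return urlunsplit(("https", host, path, urlencode(query), ""))


print(normalize_url("http://Example.com/Shoes/?utm_source=x&size=9&color=red"))
```

Every variant of a page address that passes through a function like this comes out as the same string, which is the form you would then use in internal links and sitemaps.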

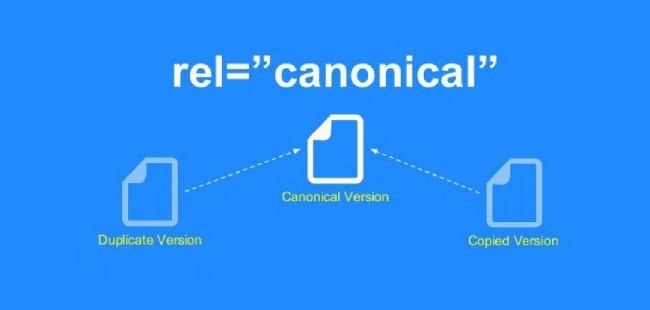

Canonicalization :

This is yet another method to handle duplicate content. When users browse products by tags and categories, Google often shows the same results. This happens most on Ecommerce websites and irks customers, who keep getting the same search results every time.

The same content on multiple URLs leaves Google unsure which URL to show in the search results. To cope with this problem, Google recommends that site owners add canonical tags to their pages. Once search engines find a canonical tag, they can link back to the original resource.
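To check that a page really declares a canonical URL, you can read the `<link rel="canonical">` element from its HTML. The sketch below uses only Python's standard library; the sample page and its URL are made up for the example.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel may hold several space-separated values.
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical category page that points at the main product URL.
PAGE = (
    "<html><head>"
    '<link rel="canonical" href="https://www.example.com/shoes/red-sneaker">'
    "</head><body>Red sneaker</body></html>"
)

print(find_canonical(PAGE))
```

Running a check like this over the tag and category pages of a shop shows quickly which ones are missing a canonical tag or point at the wrong URL.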

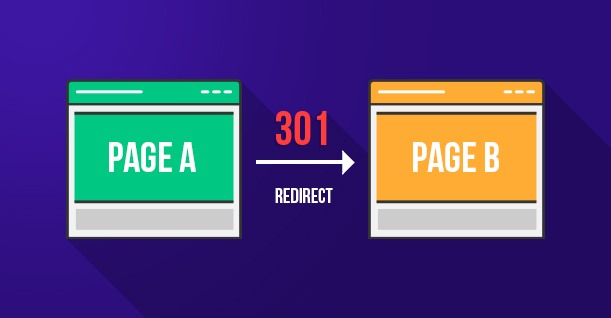

301 redirects :

Sometimes a well-meant change such as restructuring your URLs can itself cause duplicate content. So, make sure to follow the guidelines set by Google while you restructure your links. With 301 redirects, you tell the search engines which URL you prefer, which is a great way to stay clear of duplicate content.

Even if a search engine lands on a page containing duplicate content, the 301 redirect leads it to the original resource page. SEO rankings are largely preserved when each duplicate page carries a 301 redirect. You can set up 301 redirects depending on the nature of your website.
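How you configure redirects depends on your server or shop platform, so the following is only a sketch of the logic in Python. The paths in `REDIRECTS` are invented for the example. The idea it illustrates is that every retired URL should answer with a 301 that points straight at the final page, without passing through a chain of intermediate redirects.

```python
# Hypothetical mapping from retired paths to their replacements.
REDIRECTS = {
    "/products/red-sneaker.html": "/shoes/red-sneaker",
    "/shoes/red-sneaker": "/footwear/red-sneaker",
    "/old-category/shoes": "/footwear",
}


def final_target(path, redirects=REDIRECTS):
    """Follow the map until a path with no further redirect is reached."""
    seen = set()
    while path in redirects:
        if path in seen:
            raise ValueError(f"redirect loop at {path}")
        seen.add(path)
        path = redirects[path]
    return path


def respond(path, redirects=REDIRECTS):
    """Return (status_code, location) for a requested path."""
    target = final_target(path, redirects)
    if target != path:
        return 301, target  # permanent redirect straight to the final page
    return 200, path  # the path is already the preferred one


print(respond("/products/red-sneaker.html"))
print(respond("/footwear"))
```

The loop check is there because a restructuring done in several rounds can easily leave two old URLs redirecting to each other.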

Content syndication :

On Ecommerce sites, the same content often gets republished across various platforms. In such cases, Google recommends that the republishing websites link back to the original site wherever the content is repeated. This is one of the best ways to handle duplicate content.

Google Search Console tools :

Google Search Console tools are easy to set up for your website, and they readily identify duplicate and thin content, saving Ecommerce websites one of their most time-consuming tasks. Here are some ways in which the Google Search Console tools can be used to identify duplicate content.

HTML improvements – URLs with duplicate title tags and meta descriptions can be pointed out easily.

URL parameters – If there are any crawling or indexing issues for a particular website, they are reported here so that you can fix them.
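The duplicate-title check described above can also be reproduced on your own crawl data. This is a minimal sketch, assuming you already have a mapping of URL to title tag (the `PAGES` dictionary here is made up); it is not how Search Console works internally.

```python
from collections import defaultdict

# Hypothetical crawl result: URL -> contents of the <title> tag.
PAGES = {
    "https://www.example.com/shoes/red-sneaker": "Red Sneaker | Example Shop",
    "https://www.example.com/sale/red-sneaker": "Red  Sneaker | Example Shop",
    "https://www.example.com/shoes/blue-sneaker": "Blue Sneaker | Example Shop",
}


def duplicate_titles(pages):
    """Group URLs that share a title, ignoring case and extra spaces."""
    groups = defaultdict(list)
    for url, title in pages.items():
        key = " ".join(title.lower().split())
        groups[key].append(url)
    # Keep only the titles that appear on more than one URL.
    return {title: sorted(urls) for title, urls in groups.items() if len(urls) > 1}


for title, urls in duplicate_titles(PAGES).items():
    print(title, urls)
```

The same grouping works for meta descriptions if you pass those in place of the titles.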

Search Query operators :

This is yet another effective way to find duplicate content using Google. The following operators are useful.

site: – This Google operator shows most of the URLs that Google has indexed from your website. It is an extremely effective way to find out whether Google has an excessive number of URLs indexed from your website.

inurl: – This operator is generally used along with the site: operator to find particular URL parameters that Google has indexed. This helps in weeding out potentially harmful parameters indexed by Google.

intitle: – This operator shows the indexed URLs whose title tags contain specific words. On Ecommerce websites, it helps in identifying duplicate content when a product page also has its reviews on a separate page.
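If you run these checks often, it can help to build the queries from a small helper so that they are always combined the same way. The sketch below only assembles the query text, using the `site:`, `inurl:` and `intitle:` operators; the domain and search terms are placeholders.

```python
def build_query(domain, inurl=None, intitle=None):
    """Combine the site: operator with optional inurl: and intitle: filters."""
    parts = [f"site:{domain}"]
    if inurl:
        parts.append(f"inurl:{inurl}")
    if intitle:
        # Quote the title so that it is searched as one phrase.
        parts.append(f'intitle:"{intitle}"')
    return " ".join(parts)


# Placeholder domain and terms, for illustration only.
print(build_query("example.com"))
print(build_query("example.com", inurl="sessionid"))
print(build_query("example.com", intitle="Red Sneaker"))
```

Pasting the first query into Google gives a rough view of how many of your URLs are indexed; the other two narrow that view down to a parameter or a title.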

Plagiarism tools :

These are third-party tools that help in finding duplicate content on a website. Three such tools are discussed here.

Copyscape : – This tool identifies editorial duplicate content across websites. It can crawl a website's sitemap and compare the URLs in it with others in Google's index to find out whether any editorial content has been plagiarized. Copied and pasted product descriptions can be detected with ease.

Screaming Frog : – This is a very popular tool with advanced professional features. It can crawl a website and detect technical issues, such as duplicate content and error messages.

Siteliner : – This tool identifies internal duplicate content across the web pages of an Ecommerce website.
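To give a feel for how two texts can be compared for overlap, here is a small Python sketch that scores similarity using word shingles and the Jaccard index. This is a common general technique and is shown only as an illustration; it is not a description of how Copyscape, Screaming Frog, or Siteliner work, and the product descriptions are invented.

```python
def shingles(text, size=3):
    """Return the set of overlapping word groups of the given size."""
    words = text.lower().split()
    count = max(len(words) - size + 1, 1)
    return {tuple(words[i:i + size]) for i in range(count)}


def similarity(text_a, text_b, size=3):
    """Jaccard index of the two shingle sets: 0.0 (no overlap) to 1.0 (same)."""
    set_a = shingles(text_a, size)
    set_b = shingles(text_b, size)
    return len(set_a & set_b) / len(set_a | set_b)


# Invented product descriptions, for illustration only.
ORIGINAL = "soft cotton shirt with a classic collar"
REWORDED = "soft cotton shirt with a modern collar"
UNRELATED = "waterproof hiking boots for rough mountain trails"

print(round(similarity(ORIGINAL, REWORDED), 2))
print(similarity(ORIGINAL, UNRELATED))
```

A score close to 1.0 suggests that one description was copied from the other with small edits, while a score near 0.0 suggests independent text. Where to draw the line between the two is a judgment call for each site.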

Experience and intuition

Apart from the tools and tips mentioned above, your own expertise and experience are a great help when it comes to detecting duplicate content. As you continue to work on these issues, your eye becomes trained to spot duplicate content easily.

Some duplicate content is inevitable on the web. Ideas are reused, so whenever a journalist quotes from several other articles, a little duplicate content comes along with the quotes.

Still, you should take measures to keep duplicate content in check, as it can significantly reduce the SEO ranking of a website and, in turn, its web traffic.